When you ask ChatGPT to summarize a 500-page report or generate a 4K AI video, a silent, global ballet of electrons unfolds. Thousands of specialized chips in data centers kick into action, moving terabytes of data in milliseconds to power your request. Behind every generative AI response lies a hidden hardware backbone, and by 2026 the chip demands of models like ChatGPT have grown far beyond those of early LLMs.

Microsoft’s multibillion-dollar partnership with OpenAI has now entered a deeper phase, with Azure infrastructure heavily customized to accelerate OpenAI’s next-generation foundation models. Even amid OpenAI’s new funding and partnerships with Amazon, NVIDIA, and SoftBank, the core alliance remains unchanged: Microsoft retains exclusive IP rights, and Azure remains the sole cloud provider for all stateless OpenAI APIs (OpenAI, 2026). Google, Baidu, and other tech giants have also scaled up their generative AI deployments, making chip optimization for these models one of the most critical tech priorities of the era.

What Do Generative AI Models Look Like in 2026?

At its core, generative AI relies on large models pre-trained on massive global corpora, which learn to understand prompts and generate human-like text, images, or video. Today’s models fall into two broad categories, each with new hardware demands:

- Language Generation Models

Led by ChatGPT’s latest iterations (GPT-5/Orion), modern LLMs have moved far beyond simple dense architectures. They now employ Mixture-of-Experts (MoE) structures with more than a trillion total parameters (reportedly 1.8T for GPT-5), of which only a small fraction (reportedly 24B) is activated for each token (CSDN, 2026). This shift doesn’t just demand more raw computing power; it rewrites the rules for chip memory and data routing, because every expert must stay resident in memory even though only a few run at a time. By 2026, over 60% of mainstream large models have adopted MoE or similar architectures, making sparse activation the industry standard (Xinhua News Agency, 2026).
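The routing idea behind sparse activation can be sketched in a few lines. This is a minimal, hypothetical top-k gating example, not OpenAI’s design (which is not public): the function names, the eight toy "experts" that simply scale their input, and k=2 are all illustrative assumptions. A router scores every expert, but only the k highest-scoring experts are actually evaluated for a token.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their gate weights.

    Returns (expert_index, gate_weight) pairs whose weights sum to 1.
    """
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_forward(x, experts, router_logits, k=2):
    """Combine the outputs of only the k selected experts for one token.

    Unselected experts are never called: that skipped work is the compute
    saving of sparse activation (their weights still occupy memory, though).
    """
    routes = top_k_route(router_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routes)
    return output, [i for i, _ in routes]


# Hypothetical toy setup: 8 "experts" that just scale their input by 1..8.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
random.seed(0)
logits = [random.gauss(0, 1) for _ in experts]
y, used = moe_forward(1.0, experts, logits, k=2)
print(f"experts used: {used}, output: {y:.3f}")

# Active-parameter fraction implied by the reported figures in the text.
total_params, active_params = 1.8e12, 24e9
print(f"active fraction per token: {active_params / total_params:.2%}")  # 1.33%
```

With the reported numbers, each token touches roughly 1.3% of the model’s parameters, which is why MoE cuts compute per token while leaving the memory footprint as large as ever.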

- Image & Video Generation Models

Diffusion-based models (DALL-E 3, Stable Video Diffusion, Google Imagen Video) dominate this space. Unlike early image generators, 2026’s tools produce minute-long 4K video and ultra-high-resolution images. They use a comparatively small language model (hundreds of millions of parameters) only to interpret the prompt and focus most of their compute on visual generation. The open-source LTX-2, for example, can generate native 4K video at up to 50 FPS, even on high-end consumer GPUs (Lightricks, 2026).
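A quick back-of-the-envelope calculation shows why minute-long 4K output strains memory before it strains compute. The sketch below assumes uncompressed 8-bit RGB frames; real diffusion models work in a compressed latent space, so this is an upper bound on pixel data rather than what a model actually holds in memory at once.

```python
def raw_video_bytes(width, height, fps, seconds, channels=3, bytes_per_channel=1):
    """Uncompressed size of a video clip in bytes (no codec, no latent compression)."""
    return width * height * channels * bytes_per_channel * fps * seconds


# 4K UHD (3840x2160), 8-bit RGB, 50 FPS, one minute: resolution, frame rate,
# and length follow the text; the pixel format is an assumption.
size = raw_video_bytes(3840, 2160, fps=50, seconds=60)
print(f"{size / 1e9:.1f} GB of raw pixels")  # 74.6 GB
```

Roughly 75 GB of raw pixels for a single minute is already beyond the ~20 GB that early image models fit in, which is why these workloads lean so heavily on large, fast memory.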

By 2026, the single biggest hardware challenge for these models isn’t computing power but the memory wall: the widening gap between how fast chips can compute and how fast they can move data to and from memory. This bottleneck shapes every hardware requirement for ChatGPT and its peers.
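The memory wall is commonly summarized with the roofline model: attainable throughput = min(peak compute, memory bandwidth × arithmetic intensity), where arithmetic intensity is the number of FLOPs performed per byte moved from memory. The accelerator below (1,000 TFLOPS peak, 4 TB/s of bandwidth) is hypothetical and does not describe any specific product.

```python
def attainable_tflops(peak_tflops, bandwidth_tb_s, flops_per_byte):
    """Roofline model: throughput is capped by compute or by memory traffic."""
    # TB/s multiplied by FLOP/byte gives the TFLOP/s that memory can sustain.
    memory_bound = bandwidth_tb_s * flops_per_byte
    return min(peak_tflops, memory_bound)


# Hypothetical accelerator parameters.
PEAK, BW = 1000.0, 4.0
ridge = PEAK / BW  # intensity needed to become compute-bound: 250 FLOP/byte
for intensity in (2, 50, 250, 1000):
    perf = attainable_tflops(PEAK, BW, intensity)
    print(f"{intensity:>5} FLOP/byte -> {perf:7.1f} TFLOPS ({perf / PEAK:.1%} of peak)")
```

For token-by-token LLM decoding at small batch sizes, each weight byte fetched from memory feeds only about 1 to 2 FLOPs (one multiply-add per parameter with 16-bit or 8-bit weights), which puts that workload deep in the memory-bound region: on this hypothetical chip, under 1% of peak compute would be usable.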

Core Chip Requirements for 2026 ChatGPT & Generative AI

Every hardware demand ties back to breaking the memory wall—distributed computing, memory capacity, and raw compute all serve this single goal.

1. Distributed Computing: Breaking the Memory Wall at Scale

MoE LLMs are far too large to fit in the memory of a single chip, or even a single server, making distributed computing non-negotiable. The real bottleneck here isn’t compute; it’s the interconnect that moves data between chips.

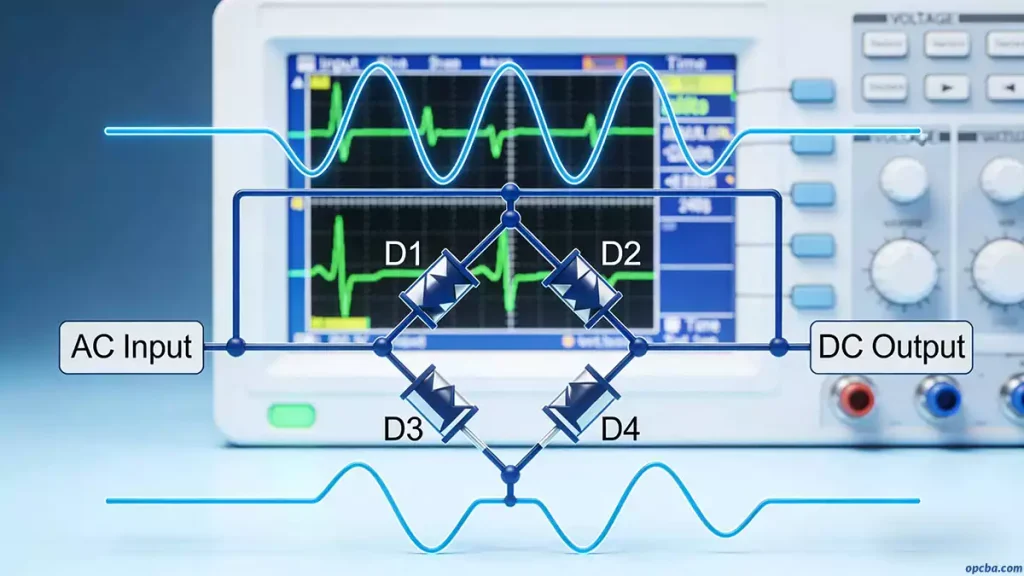

High-bandwidth, low-latency connectivity between chips and servers is critical. Think of it as a high-speed conveyor belt between chefs: if a thousand chips are cooking one massive recipe together, they need to pass ingredients (data) to each other almost instantly. Technologies such as RDMA (Remote Direct Memory Access) let one machine read from or write to another machine’s memory directly, bypassing the slow central pantry (the CPU and operating system) and eliminating delays that cripple large-model performance. In large-scale distributed AI training, sustaining these data rates also depends on High Speed PCB design that minimizes latency and signal loss.
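RDMA is the transport; what typically travels over it during training is a collective operation such as all-reduce, which sums gradients across every chip. The sketch below simulates the classic ring all-reduce in pure Python and estimates its bandwidth-bound time. It illustrates the communication pattern only (it is not RDMA itself or any vendor library), and the 10 GB buffer, 8 workers, and 50 GB/s link speed are hypothetical.

```python
def ring_allreduce(buffers):
    """Simulate a ring all-reduce (element-wise sum) across len(buffers) workers.

    Each buffer is split into n chunks. During n-1 reduce-scatter steps every
    worker passes one chunk to its right-hand neighbor, which adds it to its own
    copy; afterwards each worker owns one fully summed chunk. During n-1
    all-gather steps the finished chunks circulate until every worker has all of them.
    """
    n = len(buffers)
    if len(buffers[0]) % n:
        raise ValueError("buffer length must divide evenly into n chunks")
    size = len(buffers[0]) // n
    data = [list(b) for b in buffers]  # copy so the inputs are left untouched

    def chunk(c):
        return range(c * size, (c + 1) * size)

    def ring_step(pick_chunk, accumulate):
        # Snapshot every outgoing chunk first so all transfers in a step happen "at once".
        outgoing = [(w, pick_chunk(w), [data[w][i] for i in chunk(pick_chunk(w))]) for w in range(n)]
        for w, c, payload in outgoing:
            dst = data[(w + 1) % n]
            for i, v in zip(chunk(c), payload):
                dst[i] = dst[i] + v if accumulate else v

    for step in range(n - 1):  # reduce-scatter
        ring_step(lambda w: (w - step) % n, accumulate=True)
    for step in range(n - 1):  # all-gather: worker w starts owning chunk (w + 1) mod n
        ring_step(lambda w: (w + 1 - step) % n, accumulate=False)
    return data


def ring_allreduce_seconds(num_bytes, workers, link_gb_s):
    """Bandwidth-only estimate: each worker sends 2 * (n - 1) / n of the buffer."""
    return 2 * (workers - 1) / workers * num_bytes / (link_gb_s * 1e9)


print(ring_allreduce([[1, 2, 3, 4], [10, 20, 30, 40]]))  # [[11, 22, 33, 44], [11, 22, 33, 44]]
print(f"{ring_allreduce_seconds(10e9, workers=8, link_gb_s=50):.2f} s")  # 0.35 s
```

Because each worker transmits about 2(n-1)/n times the buffer no matter how many workers join, per-link bandwidth sets the floor on synchronization time, which is exactly what RDMA-capable networks and careful board design aim to protect.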

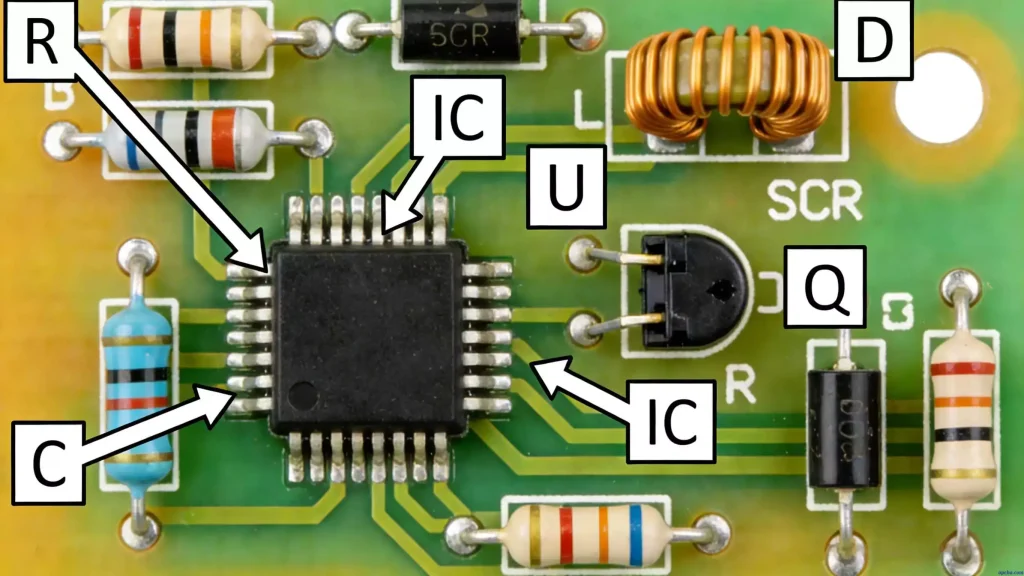

For 2026 generative AI, chips must natively support this kind of high-speed distributed architecture, with no exceptions. The dense packaging of these AI chips and the assembly of data center server motherboards rely on advanced SMT (Surface Mount Technology) to ensure precise component soldering and signal integrity.

2. Memory Capacity & Bandwidth: The Heart of the Memory Wall

Even with distributed systems, each chip’s local memory is pushed to its limit, making HBM (High-Bandwidth Memory) the non-negotiable standard for AI accelerators.

- Early image models fit in ~20GB of memory, but 2026’s video-generation models and MoE LLMs require multi-layer HBM stacks with expanded capacity and speed.

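- Newer technologies such as CXL (Compute Express Link), which lets servers attach and pool additional memory, and software techniques such as quantization and offloading further expand usable memory, but the core challenge remains: feeding data to compute cores fast enough to keep them from sitting idle.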
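A rough capacity estimate shows why HBM becomes the bottleneck. The sketch below counts bytes for model weights plus the key/value (KV) cache that transformer LLMs keep for active requests, then divides by per-chip HBM. Only the 1.8T total-parameter figure comes from the text; the FP8 weight format, layer and head counts, 128K-token context, batch of 8, and 192 GB of HBM per chip are hypothetical. An MoE model must keep all of its experts resident even though only a few run per token, so the full parameter count is what matters here.

```python
import math


def weight_bytes(num_params, bytes_per_param):
    """Memory needed to store every parameter (all experts, for an MoE model)."""
    return num_params * bytes_per_param


def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bytes_per_value):
    """Attention keys and values (hence the factor of 2) kept for every layer and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


def min_chips(total_bytes, hbm_bytes_per_chip, usable_fraction=0.9):
    """Lower bound on chips needed just to hold the data in HBM (ignores activations)."""
    return math.ceil(total_bytes / (hbm_bytes_per_chip * usable_fraction))


HBM = 192e9  # hypothetical HBM capacity per chip, in bytes
weights = weight_bytes(1.8e12, 1)  # 1.8T parameters stored in FP8 (1 byte each)
kv = kv_cache_bytes(layers=120, kv_heads=8, head_dim=128,
                    seq_len=128_000, batch=8, bytes_per_value=2)  # hypothetical config

print(f"weights: {weights / 1e12:.2f} TB, KV cache: {kv / 1e12:.2f} TB")
print(f"chips for weights alone: {min_chips(weights, HBM)}")
print(f"chips for weights + KV cache: {min_chips(weights + kv, HBM)}")
```

Under these assumptions the weights alone need at least 11 chips and the KV cache pushes that to 14, before counting activations or any redundancy, so capacity per chip directly dictates how widely a model must be spread.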

This is the memory wall in action: even the most powerful compute chips sit idle if they can’t access parameters and data quickly enough. When designing high-speed PCBs for AI servers, engineers use professional tools such as Eagle PCB to optimize routing and minimize signal interference.

3. Computing Power: Scaled for 4K Video & MoE LLMs

Compute demand has exploded since early generative AI models:

- While early diffusion models required ~20 TFLOPS, today’s state-of-the-art video generation models—producing minute-long 4K clips—demand sustained performance in the petaFLOP range during training and hundreds of TFLOPS for interactive inference.

- MoE language models are still dominated by matrix multiplications, but because of sparse activation, compute efficiency matters more than raw FLOPS. For example, GPT-5’s 1.8T-parameter MoE architecture reportedly reduces inference costs by 70% and speeds up processing by 3x compared to dense models (Toutiao, 2026).
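The dense-versus-sparse gap is easy to quantify with a widely used rule of thumb: a transformer forward pass costs about 2 FLOPs per active parameter per token, and training about 6 (counting the backward pass). These are approximations that ignore attention and routing overhead; the parameter counts are the reported figures quoted in the text, the "dense" baseline is an idealized model that uses all 1.8T parameters per token, and the 10-trillion-token training budget is hypothetical.

```python
def inference_flops_per_token(active_params):
    """Rule of thumb: ~2 FLOPs (one multiply, one add) per active parameter per token."""
    return 2 * active_params


def training_flops(active_params, tokens):
    """Rule of thumb: forward + backward pass ~ 6 FLOPs per active parameter per token."""
    return 6 * active_params * tokens


TOTAL_PARAMS = 1.8e12  # reported total parameters (all used by an idealized dense model)
ACTIVE_PARAMS = 24e9   # reported parameters activated per token by the MoE model

dense = inference_flops_per_token(TOTAL_PARAMS)
moe = inference_flops_per_token(ACTIVE_PARAMS)
print(f"dense: {dense:.2e} FLOPs/token, MoE: {moe:.2e} FLOPs/token ({dense / moe:.0f}x fewer)")

# Hypothetical 10-trillion-token training run for the sparse model.
print(f"MoE training compute: {training_flops(ACTIVE_PARAMS, 10e12):.2e} FLOPs")
```

The idealized 75x reduction in arithmetic is far larger than the reported 70% cost saving and 3x speedup; routing, communication between experts on different chips, and memory traffic plausibly account for much of the difference, which is the memory wall reasserting itself.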

In short, 2026 generative AI chips can’t excel at just one thing—they need balanced distributed computing, memory, and compute to break the memory wall.

GPUs vs. Custom AI Chips: 2026 Market Reality

The 2023–2024 prediction that “AI chips would catch up to GPUs” is now a present-day reality. Here’s the current landscape:

For Language Models (ChatGPT)

NVIDIA’s GPUs remain dominant thanks to their mature CUDA software stack, including the NCCL library for fast multi-GPU communication and model-parallel frameworks such as Megatron-LM, which make it practical to split MoE models across many GPUs. Matrix multiplication, the core of LLM compute, remains a GPU strength, making GPUs the go-to choice for early-stage model development. However, cloud giants like Google and AWS are aggressively shifting to custom silicon to reduce costs.
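Splitting an MoE model across GPUs usually means expert parallelism: each GPU hosts a subset of the experts, and after routing, every token is shipped to whichever GPUs hold its chosen experts. The sketch below shows only that grouping step. The contiguous expert layout, the 64-expert/8-GPU sizes, and the routing decisions are hypothetical, and production frameworks add capacity limits, load balancing, and the actual all-to-all transfer.

```python
from collections import defaultdict


def dispatch_tokens(token_experts, num_experts, num_gpus):
    """Group (token, expert) pairs by the GPU that hosts the expert.

    Assumes experts are sharded contiguously: with 64 experts on 8 GPUs,
    experts 0-7 live on GPU 0, experts 8-15 on GPU 1, and so on.
    token_experts[t] lists the experts the router picked for token t.
    """
    if num_experts % num_gpus:
        raise ValueError("num_experts must be divisible by num_gpus")
    experts_per_gpu = num_experts // num_gpus
    outbox = defaultdict(list)
    for token, experts in enumerate(token_experts):
        for expert in experts:
            outbox[expert // experts_per_gpu].append((token, expert))
    return dict(outbox)


# Hypothetical top-2 routing decisions for four tokens over 64 experts.
routing = [[3, 17], [3, 60], [41, 42], [9, 10]]
for gpu, pairs in sorted(dispatch_tokens(routing, num_experts=64, num_gpus=8).items()):
    print(f"GPU {gpu}: {pairs}")
```

Token 2 only needs GPU 5, while token 0 must be sent to both GPU 0 and GPU 2. Every GPU builds such an outbox for its own tokens and then exchanges them in an all-to-all collective, so interconnect bandwidth, not raw FLOPS, often limits MoE throughput.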

For Image & Video Generation

Custom AI chips have overtaken GPUs in specific workloads. Cloud giants’ custom silicon (Google TPU v8, AWS Trainium3) and startup-designed chips deliver superior TCO (Total Cost of Ownership) for large-scale video generation, with optimized on-chip memory and convolution acceleration tailored to visual models. For example, AWS Trainium3 provides 2.52 PFLOPS of FP8 compute, with 4x better energy efficiency than its predecessor (AWS, 2026).
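TCO compares chips by cost per unit of useful work rather than by peak FLOPS. A minimal model is sketched below; every price, power figure, and throughput in it is invented for illustration and describes no real GPU, TPU, or Trainium part. It shows how a cheaper, more power-efficient chip can win on TCO even when its raw throughput is lower.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    price_usd: float   # purchase cost per chip
    power_kw: float    # average draw per chip over its life, cooling included
    throughput: float  # useful work per busy hour (e.g. seconds of video generated)


def tco_per_unit(acc, years=4, usd_per_kwh=0.10, utilization=0.7):
    """Simplified TCO per unit of work: (purchase + energy) / work delivered.

    Ignores networking, facilities, staff, and software-porting costs.
    """
    hours = years * 365 * 24
    energy_cost = acc.power_kw * hours * usd_per_kwh
    work = acc.throughput * hours * utilization
    return (acc.price_usd + energy_cost) / work


# Every number below is hypothetical.
gpu = Accelerator("general-purpose GPU", price_usd=30_000, power_kw=1.2, throughput=10)
asic = Accelerator("custom ASIC", price_usd=15_000, power_kw=0.8, throughput=8)
for acc in (gpu, asic):
    print(f"{acc.name}: ${tco_per_unit(acc):.3f} per unit of work")
```

Here the hypothetical ASIC delivers 20% less throughput yet costs about a third less per unit of work. A fuller model would also weigh the engineering cost of moving workloads off a mature software stack such as CUDA.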

2026 Final Verdict

While NVIDIA’s GPUs remain a dominant force due to their mature software stack (CUDA), the long-predicted rise of specialized AI chips has now materialized. According to TrendForce, the share of ASICs in AI servers is expected to jump from 20.9% in 2025 to 27.8% in 2026, while GPU share shrinks from 75.9% to 69.7% (Nasdaq, 2026). Custom silicon from cloud giants (Google TPU, AWS Trainium) and startups now offers superior total cost of ownership (TCO) for specific generative AI workloads, particularly in large-scale training clusters. Google, for instance, projects that TPUs will account for nearly 78% of its AI server shipments in 2026 (EMSNow, 2026).

The real winners in 2026 aren’t the chips with the most TFLOPS—they’re the chips that solve the memory wall best, moving data faster and more efficiently than ever before.